Hey, it's Amit here again.

Over the next three years, SEO traffic is likely to become less predictable.

Not because search is dying - but because discovery is spreading across more surfaces.

The mistake most teams will make is trying to protect traffic, instead of reinforcing the signals that still compound when traffic fragments.

Why this conversation matters now

The Reuters Institute recently published its 2026 Journalism, Media & Technology Trends report.

A key theme running through it:

many publishers expect a 40% decline in search traffic over the coming years.

Reuters study using data from Chartbeat

That expectation isn’t unreasonable.

AI answers reduce clicks.

Social referrals are less reliable.

Discovery is no longer concentrated in one place.

But this isn’t a prediction about relevance disappearing.

It’s a prediction about distribution changing.

Search isn’t collapsing - it’s fragmenting

People still:

research

compare

validate

look for second opinions

They’re just doing it across more places:

Google, AI tools, YouTube, editorial sites, communities.

Which changes the question from:

“How do I protect traffic?”

to:

“What signals survive when traffic becomes unreliable?”

Not to mention that much of the traffic sites were getting probably wasn’t converting anyway. I’d argue much of it was the result of SEO strategy that was a bit “vanity metric” heavy - understandable in a world where we were all being KPI’d on YoY traffic growth.

Gaetano DiNardi summed it up well in his Search Engine Land article on “The Great Normalization”:

Let’s be real: most websites never deserved those clicks in the first place. They were either topically irrelevant or played a questionable role in influencing conversions.

Information gain is now the baseline

In this environment, surface-level content doesn’t hold up.

Covering the obvious answer isn’t enough.

Repackaging consensus isn’t enough.

To maintain visibility - human and AI - content increasingly needs to:

add something new

anticipate follow-up questions

cover adjacent angles and fan-outs

demonstrate real audience understanding

This is no longer about rankings alone.

It’s about teaching systems what your content is actually for. This is straight from the patent for information gain.

What is information gain

How it influences search results

But content alone rarely carries authority

Even strong content often struggles on its own in competitive spaces.

Modern search systems don’t just ask:

“Is this good?”

They ask:

“Who else treats this as credible?”

I’d argue that for the foreseeable future, search (be it AI search or traditional search) can’t rely completely on on-page signals - it MUST always have something external to the website itself.

That’s where off-page signals come back into focus.

Off-page signals are the stabiliser

As clicks become less predictable, new link heuristics are popping up.

Since the HCU (Google’s Helpful Content Update) and AI came onto the scene, one of the loudest is the idea that “links on pages with traffic are the best links” - because traffic supposedly signals quality.

It sounds neat.

It also starts to fall apart quickly.

If SEO traffic declines or fragments into AI interfaces, how reliable is any traffic estimate long-term?

Side story: I personally know of an agency that was selling “page traffic links”. An employee of theirs later admitted to me privately that they would just buy links on link farms because the page had traffic (without screening the keywords that traffic came from).

People in the agency questioned it with the founder but were told, “just build it, the client doesn’t check anyway.”

Take from that story what you will…

So, traffic is an output, not a cause.

Search systems don’t reward pages purely because they get traffic.

They reward pages because they’re relevant, trusted, and well-positioned within a topic - which may result in traffic.

Optimising for traffic as the proxy means optimising for something increasingly unstable. So be careful not to fall into that trap.

Where the “semantic link” argument breaks

You’ll also hear people talk about semantic links - links placed in relevant content.

That direction is broadly correct.

But many stop too early.

If anyone can place a link on that page via payment, templating, or mass outreach, the semantic value decays fast.

The real question is:

Can this link only exist if the content genuinely belongs there?

That constraint - editorial judgment, audience fit, topical depth - is the actual signal.

And of course, the signal is stronger still when the content itself follows the practices we’ve discussed in previous newsletters, like information gain, and when it’s on a site with brand signals.

Why this holds up even in a reduced-click world

AI systems don’t need clicks to infer relevance.

They learn from:

where you’re mentioned

the topics you’re associated with

the entities you appear alongside

how consistently that association shows up

A highly relevant link or mention on a low-traffic page can still matter - because it teaches the system what you are about, not just who visited you.

That’s why obsessing over traffic alone is fragile.

And why the safest approach remains:

Build links and mentions on pages and domains that are hard to access unless your content genuinely fits.

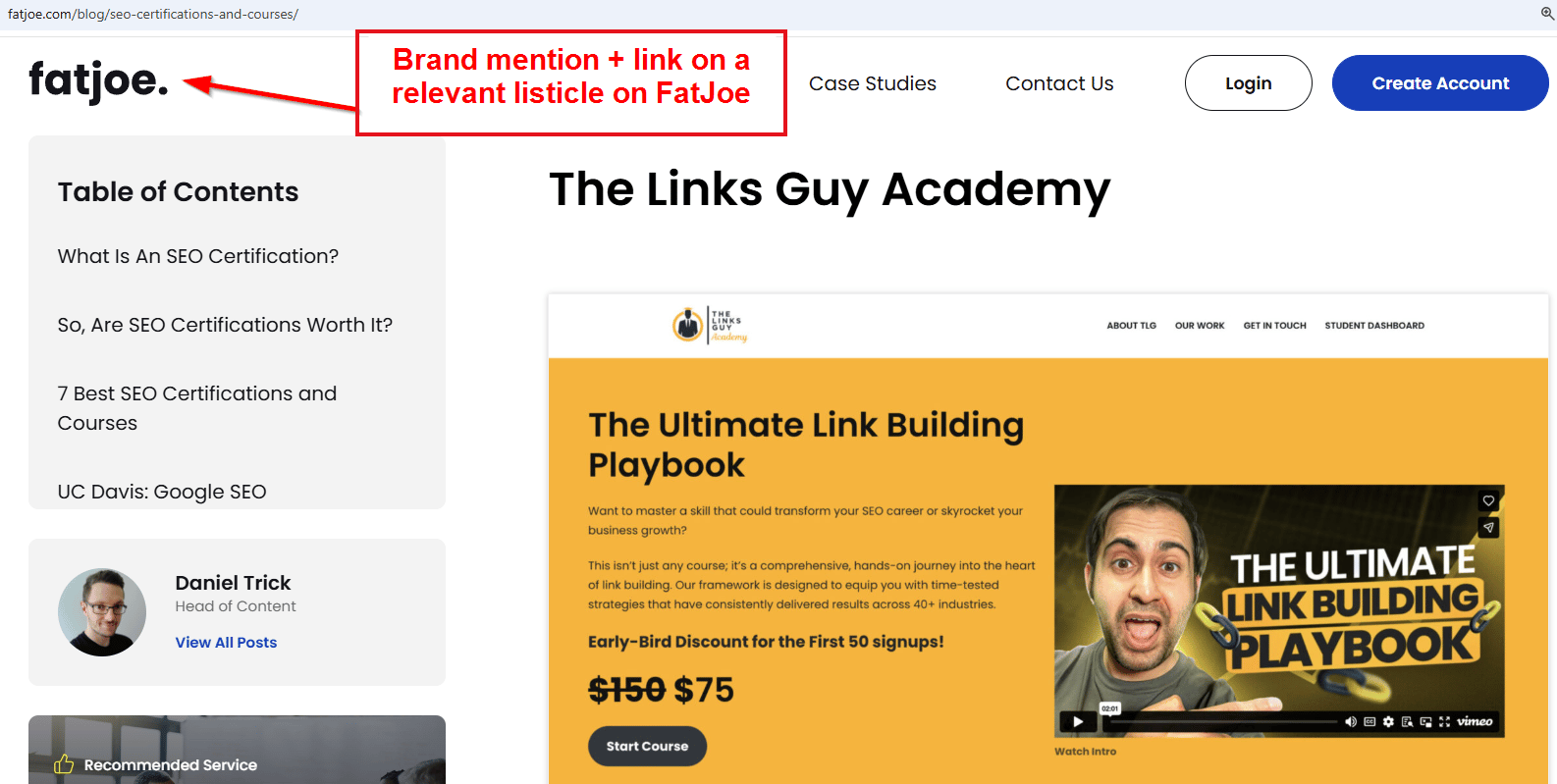

A quick example from my own site

Recently, I refreshed one of my higher-competition pages around link building courses. Not only did I explain every course, I also covered specifics that NOBODY else seemed to mention in their listicles. It was stuff only I could have known, because I either took the course or know people who have.

That on-page work helped.

But the real movement came only when I did off-page work as well.

The page or entity was also reinforced with:

a relevant industry mention (shown below)

a couple of contextual backlinks

That’s when things shifted.

Rankings improved.

And more interestingly, LLM-driven click-throughs increased too, AND the page appeared in the AI Overview.

Not instantly.

Not cleanly.

But cumulatively.

(Looker Studio - LLM traffic after content refresh + off-page reinforcement)

ChatGPT + Perplexity sessions

(Making an appearance in the AI Overview on Google USA for “link building training”)

Visibility on the AIO as well

What to do next (practically)

If traffic becomes less predictable, the response isn’t panic.

It’s resilience.

That means:

producing content with genuine information gain

reinforcing it with selective, relevant off-page signals

building visibility across more than one discovery surface (YouTube, LinkedIn, email newsletter, etc.)

I still recommend that you or someone on your team get hands-on with things like HARO-style pitching - because it teaches you what editors and systems actually reward.

👉 I’ve got a free guide on The Links Guy covering this in detail: HARO Link Building Guide

👉 The Links Guy Academy goes deeper, with real examples and breakdowns.

The takeaway

Traffic may fragment over the next three years.

Authority still compounds.

The teams that understand that difference - and act on it - will feel the shift far less than everyone else.

Amit Raj

The Links Guy

P.S.

The people who adapt early won’t “lose traffic” - they’ll just stop depending on it.